Publications

Stochastic Krasnoselskii-Mann Iterations: Convergence without Uniformly Bounded Variance. Daniel Cortild and Coralia Cartis. arXiv preprint arXiv:2604.22581, Apr 2026

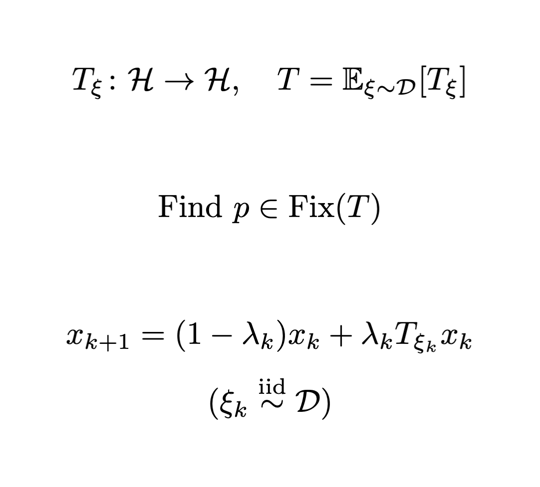

We investigate the Stochastic Krasnoselskii-Mann iterations for expected nonexpansive fixed-point problems in a real Hilbert space. We establish convergence guarantees under significantly weaker assumptions on the variance than those typically used in the literature. In particular, instead of a uniform bound on the variance of the stochastic oracle, we only assume finite variance at a single fixed point. Under this assumption, we prove almost sure weak convergence of the iterates, derive convergence rates for the expected residual, and obtain almost sure convergence rates for the running minimum residual. Notably, we recover the best-known stochastic oracle complexity without imposing uniformly bounded variance. We illustrate the applicability of our results to Stochastic Gradient Descent, where we recover known guarantees, and to Stochastic Three-Operator Splitting, for which we obtain the first results that avoid uniform variance bounds.

@article{cortild_stochastic_2026, title = {{Stochastic Krasnoselskii-Mann Iterations: Convergence without Uniformly Bounded Variance}}, author = {Cortild, Daniel and Cartis, Coralia}, journal = {arXiv preprint arXiv:2604.22581}, month = apr, year = {2026}, }
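A stochastic Krasnoselskii-Mann step, as discussed in the abstract above, typically relaxes a noisy operator evaluation: $x_{k+1} = (1-\alpha_k)x_k + \alpha_k \hat T(x_k,\xi_k)$. Below is a minimal Python sketch on a toy problem made up for illustration: the expected operator is $T(x) = Rx + c$ with $R$ a 90-degree rotation (an isometry, hence nonexpansive), and the oracle is unbiased but its noise grows with $\|x\|$, so its variance is finite at the fixed point without being uniformly bounded. The schedule $\alpha_k = (k+1)^{-3/4}$, the noise model and the names `stochastic_km` and `noisy_T` are assumptions, not the paper's parameters, and the toy oracle is not checked against the paper's exact hypotheses.

```python
import numpy as np


def stochastic_km(oracle, x0, n_iter, rng, alpha=lambda k: (k + 1) ** -0.75):
    """Run x_{k+1} = (1 - a_k) x_k + a_k * oracle(x_k) and return the last iterate."""
    x = np.asarray(x0, dtype=float)
    for k in range(n_iter):
        a = alpha(k)  # relaxation parameter a_k (assumed schedule)
        x = (1.0 - a) * x + a * oracle(x, rng)
    return x


# Toy problem (assumption): T(x) = R x + c with R a 90-degree rotation.
R = np.array([[0.0, -1.0], [1.0, 0.0]])
x_star = np.array([1.0, 2.0])
c = x_star - R @ x_star  # chosen so that T(x_star) = x_star
SIGMA = 0.2


def noisy_T(x, rng):
    # Unbiased estimate of T(x); the noise scale grows with ||x||, so the
    # variance is finite everywhere but has no uniform bound.
    return R @ x + c + SIGMA * (1.0 + np.linalg.norm(x)) * rng.standard_normal(2)


rng = np.random.default_rng(0)
x_final = stochastic_km(noisy_T, x0=[10.0, -10.0], n_iter=20_000, rng=rng)
print("distance to fixed point:", np.linalg.norm(x_final - x_star))
```

Since $I - R$ is invertible, $x^\star = (1, 2)$ is the unique fixed point of $T$; with the noise switched off the same loop reduces to the classical (deterministic) Krasnoselskii-Mann iteration.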

Regularization Methods for Solving Hierarchical Variational Inequalities with Complexity Guarantees. Daniel Cortild, Meggie Marschner, and Mathias Staudigl. arXiv preprint arXiv:2512.20772, Dec 2025

We consider hierarchical variational inequality problems, or more generally, variational inequalities defined over the set of zeros of a monotone operator. This framework includes convex optimization over equilibrium constraints and equilibrium selection problems. In a real Hilbert space setting, we combine a Tikhonov regularization and a proximal penalization to develop a flexible double-loop method for which we prove asymptotic convergence and provide rate statements in terms of gap functions. The method effectively accommodates a large class of structured operator splitting formulations for which fixed-point encodings are available. Finally, we validate our findings numerically on various examples.

@article{cortild_regularization_2025, title = {{Regularization Methods for Solving Hierarchical Variational Inequalities with Complexity Guarantees}}, author = {Cortild, Daniel and Marschner, Meggie and Staudigl, Mathias}, journal = {arXiv preprint arXiv:2512.20772}, month = dec, year = {2025}, }
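To make the Tikhonov ingredient from the abstract above concrete, here is a minimal sketch on a toy instance chosen for illustration. The lower-level operator is $A(x) = M^\top(Mx - b)$, which is monotone and whose zeros are the solutions of $Mx = b$ when that system is consistent; the upper-level operator is $F(x) = x - z$, so the hierarchical problem asks for the solution of $Mx = b$ closest to $z$. For each $\varepsilon$ the sketch solves the regularized equation $A(x) + \varepsilon F(x) = 0$ exactly, whereas the paper's double-loop method treats the inner problem iteratively together with a proximal penalization; the helper name `tikhonov_path` and the data are hypothetical.

```python
import numpy as np


def tikhonov_path(M, b, z, eps_schedule):
    """For each eps, solve M^T (M x - b) + eps * (x - z) = 0 (Tikhonov-regularized equation)."""
    d = M.shape[1]
    H, g = M.T @ M, M.T @ b
    return [np.linalg.solve(H + eps * np.eye(d), g + eps * z) for eps in eps_schedule]


# Underdetermined, consistent system: A(x) = M^T (M x - b) has infinitely many zeros.
M = np.array([[1.0, 0.0, 1.0, 0.0, 0.0],
              [0.0, 1.0, 0.0, 1.0, 0.0],
              [0.0, 0.0, 0.0, 0.0, 1.0]])
b = np.array([1.0, 2.0, 3.0])
z = np.zeros(5)  # upper level: select the zero closest to z (here, the minimum-norm one)

path = tikhonov_path(M, b, z, eps_schedule=[10.0 ** -k for k in range(9)])
print(path[-1])
```

As $\varepsilon \to 0$ the regularized solutions approach the minimum-norm solution $(0.5, 1, 0.5, 1, 3)$ of $Mx = b$; for a general $z$ the limit is the projection of $z$ onto the solution set.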

Global Optimization Algorithm through High-Resolution Sampling. Daniel Cortild, Claire Delplancke, Nadia Oudjane, and Juan Peypouquet. Transactions on Machine Learning Research, Sep 2025

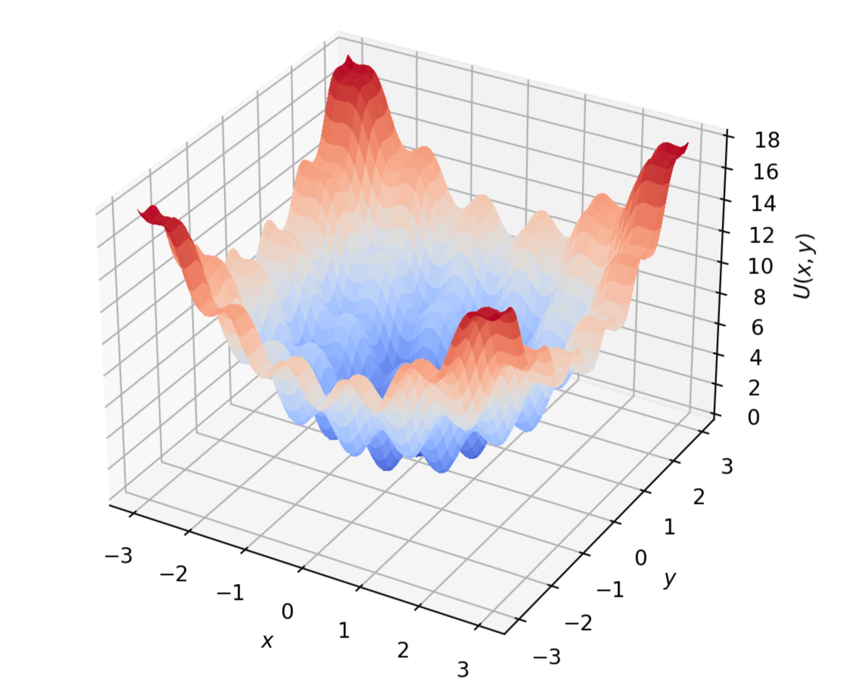

We present an optimization algorithm that can identify a global minimum of a potentially nonconvex smooth function with high probability, assuming the Gibbs measure of the potential satisfies a logarithmic Sobolev inequality. Our contribution is twofold: on the one hand, we propose a global optimization method, which is built on an oracle sampling algorithm producing arbitrarily accurate samples from a given Gibbs measure. On the other hand, we propose a new sampling algorithm, drawing inspiration from both overdamped and underdamped Langevin dynamics, as well as from the high-resolution differential equation known for its acceleration in deterministic settings. While the focus of the paper is primarily theoretical, we demonstrate the effectiveness of our algorithms on the Rastrigin function, where they outperform recent approaches.

@article{cortild_global_2025, title = {{Global Optimization Algorithm through High-Resolution Sampling}}, author = {Cortild, Daniel and Delplancke, Claire and Oudjane, Nadia and Peypouquet, Juan}, journal = {Transactions on Machine Learning Research}, month = sep, year = {2025}, }
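The sample-then-select idea from the abstract above can be illustrated with a much simpler sampler than the one proposed in the paper. The sketch below swaps in the plain unadjusted Langevin algorithm (ULA), $x_{k+1} = x_k - h\nabla f(x_k) + \sqrt{2h\varepsilon}\,\xi_k$, which approximately targets the Gibbs measure $\propto e^{-f/\varepsilon}$. It runs on the one-dimensional Rastrigin function $f(x) = 10 + x^2 - 10\cos(2\pi x)$, whose global minimum is $f(0) = 0$, and keeps the best point visited. The step size $h$, temperature $\varepsilon$, number of chains and function names are illustrative assumptions. With such a short run and low temperature the chains rarely cross the barriers between local minima, so in this sketch the many parallel chains do much of the work.

```python
import numpy as np


def rastrigin(x):
    return 10.0 + x**2 - 10.0 * np.cos(2.0 * np.pi * x)


def grad_rastrigin(x):
    return 2.0 * x + 20.0 * np.pi * np.sin(2.0 * np.pi * x)


def langevin_search(f, grad_f, rng, n_chains=200, n_steps=2_000, h=1e-3, eps=0.5,
                    lo=-5.12, hi=5.12):
    """Run parallel ULA chains targeting exp(-f/eps); return the best point visited."""
    x = rng.uniform(lo, hi, size=n_chains)
    best_x, best_f = x.copy(), f(x)  # best point seen so far, per chain
    for _ in range(n_steps):
        # ULA step: gradient drift plus Gaussian noise of variance 2*h*eps
        x = x - h * grad_f(x) + np.sqrt(2.0 * h * eps) * rng.standard_normal(n_chains)
        fx = f(x)
        better = fx < best_f
        best_x[better], best_f[better] = x[better], fx[better]
    i = np.argmin(best_f)
    return best_x[i], best_f[i]


rng = np.random.default_rng(0)
x_best, f_best = langevin_search(rastrigin, grad_rastrigin, rng)
print(f"best x = {x_best:.4f}, f = {f_best:.2e}")
```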

Last-Iterate Complexity of SGD for Convex and Smooth Stochastic Problems. Guillaume Garrigos, Daniel Cortild, Lucas Ketels, and Juan Peypouquet. arXiv preprint arXiv:2507.14122, Jul 2025

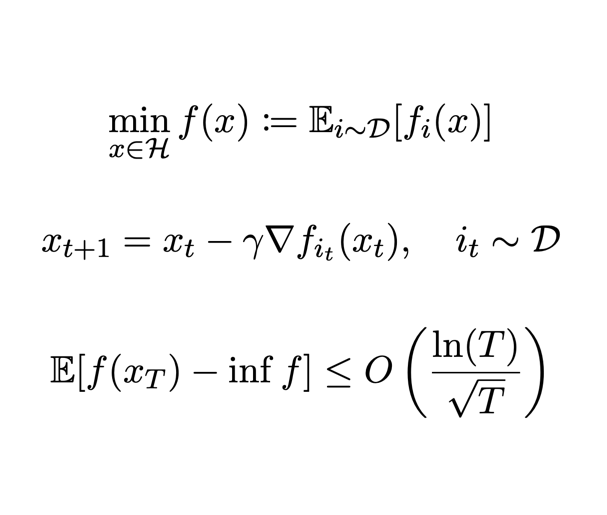

Most results on Stochastic Gradient Descent (SGD) in the convex and smooth setting are presented in the form of bounds on the ergodic function value gap. It is an open question whether bounds can be derived directly on the last iterate of SGD in this context. Recent advances suggest that it should be possible. For instance, it can be achieved by making the additional, yet unverifiable, assumption that the variance of the stochastic gradients is uniformly bounded. In this paper, we show that no such assumption is needed, and that SGD enjoys an $\tilde{O}(T^{-1/2})$ last-iterate complexity rate for convex smooth stochastic problems.

@article{garrigos_lastiterate_2025, title = {{Last-Iterate Complexity of SGD for Convex and Smooth Stochastic Problems}}, author = {Garrigos, Guillaume and Cortild, Daniel and Ketels, Lucas and Peypouquet, Juan}, month = jul, year = {2025}, journal = {arXiv preprint arXiv:2507.14122}, }
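To show the quantity the abstract above bounds, here is a minimal sketch of plain SGD that reports its last iterate rather than an average of the iterates. The least-squares test problem, the constant step $\gamma = 1/(L\sqrt{T})$ with $L = \max_i \|a_i\|^2$, and the function names are assumptions; the paper's step-size rules may differ, and this toy problem is strongly convex, hence easier than the general convex smooth setting the paper covers.

```python
import numpy as np


def objective(A, b, x):
    """f(x) = 1/(2n) * ||A x - b||^2."""
    r = A @ x - b
    return 0.5 * np.mean(r**2)


def sgd_last_iterate(A, b, T, rng):
    """Plain SGD with constant step 1/(L*sqrt(T)); returns the last iterate x_T (no averaging)."""
    n, d = A.shape
    L = np.max(np.sum(A**2, axis=1))  # largest smoothness constant among the per-sample losses
    gamma = 1.0 / (L * np.sqrt(T))
    x = np.zeros(d)
    for _ in range(T):
        i = rng.integers(n)
        x -= gamma * (A[i] @ x - b[i]) * A[i]  # gradient of 0.5 * (a_i^T x - b_i)^2
    return x


rng = np.random.default_rng(0)
A = rng.standard_normal((200, 5))
# Noisy targets, so the stochastic gradients keep nonzero variance at the optimum.
b = A @ rng.standard_normal(5) + 0.1 * rng.standard_normal(200)
x_ls = np.linalg.lstsq(A, b, rcond=None)[0]
x_T = sgd_last_iterate(A, b, T=10_000, rng=rng)
gap0 = objective(A, b, np.zeros(5)) - objective(A, b, x_ls)
gap_T = objective(A, b, x_T) - objective(A, b, x_ls)
print(f"initial gap {gap0:.3f}, last-iterate gap {gap_T:.2e}")
```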

Bias-Optimal Bounds for SGD: A Computer-Aided Lyapunov Analysis. Daniel Cortild, Lucas Ketels, Juan Peypouquet, and Guillaume Garrigos. arXiv preprint arXiv:2505.17965, May 2025

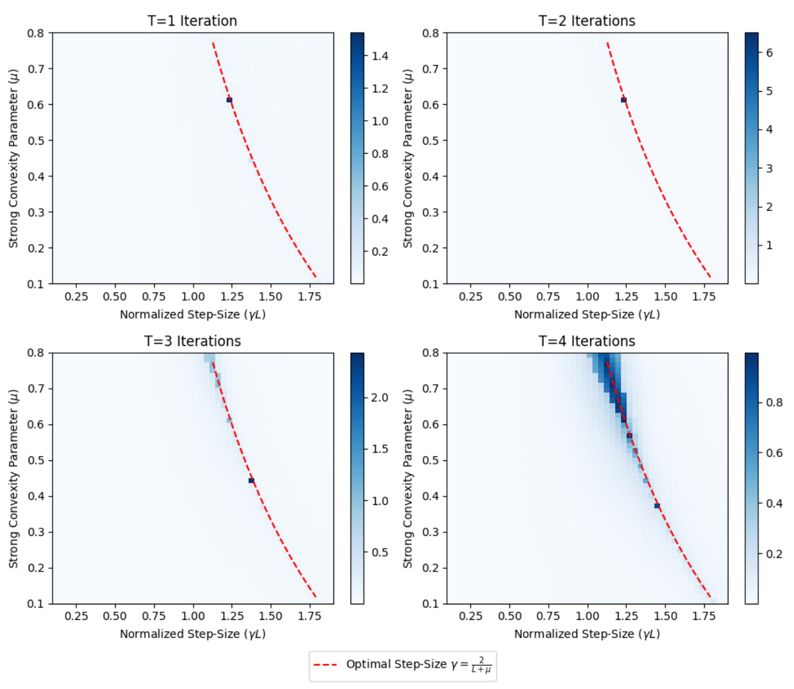

The non-asymptotic analysis of Stochastic Gradient Descent (SGD) typically yields bounds that decompose into a bias term and a variance term. In this work, we focus on the bias component and study the extent to which SGD can match the optimal convergence behavior of deterministic gradient descent. Assuming only (strong) convexity and smoothness of the objective, we derive new bounds that are bias-optimal, in the sense that the bias term coincides with the worst-case rate of gradient descent. Our results hold for the full range of constant step-sizes $\gamma L \in (0,2)$, including critical and large step-size regimes that were previously unexplored without additional variance assumptions. The bounds are obtained through the construction of a simple Lyapunov energy whose monotonicity yields sharp convergence guarantees. To design the parameters of this energy, we employ the Performance Estimation Problem framework, which we also use to provide numerical evidence for the optimality of the associated variance terms.

@article{cortild_new_2025, title = {{Bias-Optimal Bounds for SGD: A Computer-Aided Lyapunov Analysis}}, author = {Cortild, Daniel and Ketels, Lucas and Peypouquet, Juan and Garrigos, Guillaume}, month = may, year = {2025}, journal = {arXiv preprint arXiv:2505.17965}, }
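A quick numerical sanity check of the step-size range in the abstract above: on a consistent least-squares problem the stochastic gradients vanish at the solution, so only a bias-type term remains, and each SGD step is nonexpansive as long as $\gamma\|a_i\|^2 < 2$. The sketch assumes $L = \max_i \|a_i\|^2$ (the largest per-sample smoothness constant) and runs SGD with constant step $\gamma = h/L$ for several $h \in (0,2)$. It is a toy check, not the paper's Lyapunov or Performance Estimation analysis, and the function name and data are hypothetical.

```python
import numpy as np


def sgd_constant_step(A, b, h, n_iter, rng):
    """SGD on 1/(2n)||Ax - b||^2 with constant step gamma = h / L, L = max_i ||a_i||^2."""
    n, d = A.shape
    L = np.max(np.sum(A**2, axis=1))
    gamma = h / L
    x = np.zeros(d)
    for _ in range(n_iter):
        i = rng.integers(n)
        x -= gamma * (A[i] @ x - b[i]) * A[i]  # per-sample gradient step
    return x


rng = np.random.default_rng(1)
A = rng.standard_normal((50, 5))
x_true = rng.standard_normal(5)
b = A @ x_true  # consistent system: zero gradient noise at the solution

for h in (0.5, 1.0, 1.5, 1.9):
    x = sgd_constant_step(A, b, h, n_iter=20_000, rng=rng)
    print(f"gamma*L = {h}: error {np.linalg.norm(x - x_true):.1e}")
```

Replacing `b` by a noisy right-hand side leaves nonzero gradient variance at the solution, and the iterates then hover at a step-size-dependent distance from it.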

Krasnoselskii–Mann Iterations: Inertia, Perturbations and Approximation. Daniel Cortild and Juan Peypouquet. Journal of Optimization Theory and Applications, Jan 2025

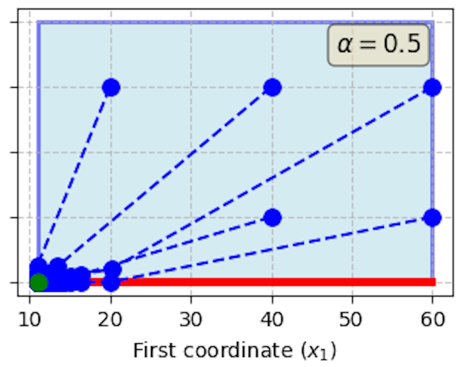

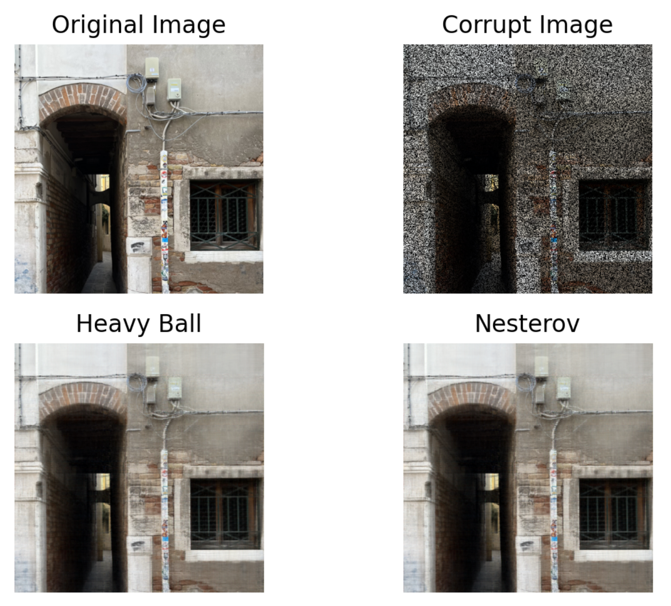

This paper is concerned with the study of a family of fixed point iterations combining relaxation with different inertial (acceleration) principles. We provide a systematic, unified and insightful analysis of the hypotheses that ensure their weak, strong and linear convergence, either matching or improving previous results obtained by analysing particular cases separately. We also show that these methods are robust with respect to different kinds of perturbations (which may come from computational errors, intentional deviations, as well as regularisation or approximation schemes) under surprisingly weak assumptions. Although we mostly focus on theoretical aspects, numerical illustrations in image inpainting and electricity production markets reveal possible trends in the behaviour of these types of methods.

@article{cortild_krasnoselskii_2025, title = {{Krasnoselskii--Mann Iterations: Inertia, Perturbations and Approximation}}, author = {Cortild, Daniel and Peypouquet, Juan}, journal = {Journal of Optimization Theory and Applications}, volume = {204}, number = {2}, pages = {35}, month = jan, year = {2025}, publisher = {Springer}, doi = {10.1007/s10957-024-02600-5}, }
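A typical member of the family described in the abstract above combines an inertial extrapolation with a relaxed fixed-point step: $y_k = x_k + \beta(x_k - x_{k-1})$, then $x_{k+1} = (1-\lambda)y_k + \lambda T(y_k)$. The sketch below uses constant $\beta = 0.2$ and $\lambda = 0.5$ (illustrative values, not taken from the paper) and applies the scheme to the gradient-step operator $T(x) = x - \gamma\nabla f(x)$ of an ill-conditioned quadratic, which is nonexpansive for $\gamma \in (0, 2/L]$. The quadratic and the function name `inertial_km` are assumptions.

```python
import numpy as np


def inertial_km(T, x0, n_iter, lam=0.5, beta=0.2):
    """Inertial Krasnoselskii-Mann: extrapolate, then take a relaxed step towards T."""
    x_prev = x = np.asarray(x0, dtype=float)
    for _ in range(n_iter):
        y = x + beta * (x - x_prev)                  # inertial extrapolation
        x_prev, x = x, (1.0 - lam) * y + lam * T(y)  # relaxed (KM) step
    return x


# f(x) = 0.5 x^T diag(D) x - b^T x has L = 1, so T = I - grad f is nonexpansive.
D = np.array([1.0, 0.01])  # diagonal of the Hessian
b = np.array([1.0, 1.0])
x_star = b / D             # unique minimizer of f, i.e. the fixed point of T


def T(x):
    return x - (D * x - b)  # gradient step with gamma = 1 / L = 1


x = inertial_km(T, x0=np.zeros(2), n_iter=5_000)
print(np.linalg.norm(x - x_star))
```

Setting `beta=0.0` recovers the plain relaxed Krasnoselskii-Mann iteration, which makes side-by-side comparisons on the same operator straightforward.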